Website Crawling: The Key to Search Engine Visibility and Indexing

Improving website crawling is like uncovering the hidden path to online success.

When you make sure that your website is crawled effectively, you unlock better visibility, higher rankings on search engines, and an influx of organic traffic.

It’s the key to making your online presence shine in the vast digital world.

Introduction

In today’s highly competitive online world, search engine optimisation (SEO) plays a vital role in attracting the attention of search engines like Google, Bing, and Yahoo.

Among the various SEO techniques, optimising website crawling is a fundamental strategy that can greatly impact how visible and well-indexed your website is.

In the following sections, we’ll look at how web crawling works, explain why your website needs to be easily crawlable, cover a range of strategies and techniques to optimise website crawling, and share practical tips for efficient crawling.

By the time you finish reading this article, you’ll have a clear understanding of how to optimise website crawling and a set of practical strategies to enhance the visibility and indexing of your website.

Let’s embark on this crawling journey and unlock the full potential of your website!

What Is Website Crawling And Why Is It Important For SEO?

Website crawling, also known as web crawling or spidering, is the process through which search engine bots systematically discover and index content on websites.

These bots, often referred to as web crawlers or spiders, navigate through the vast network of interconnected web pages by following links, analysing content, and storing information in search engine databases.

Without proper crawling, your website’s pages may remain hidden from search engine bots, resulting in poor visibility and limited organic traffic.

The importance of website crawling cannot be overstated. It serves as the foundation for search engines to understand and index your web pages, making them eligible to appear in search results when users search for relevant queries.

By optimising website crawling, you can ensure that your valuable content is discovered, indexed, and ultimately displayed to users searching for information, products, or services that your website offers.

Decoding Website Crawling

To understand website crawling and its impact on search engine optimisation, it is important to explore the mechanism behind how it works.

Let’s take a closer look at the process and the key steps involved.

How Do Search Engine Bots Discover And Index Website Content Through Crawling?

Search engine bots discover website content by following links from one webpage to another.

They download the page content, analyse it, and index relevant information to make it searchable. Google, for example, takes note of key signals within the content, from keywords to website freshness.

Through crawling, search engines can identify new pages, update existing ones, and determine the relevance and quality of the content.

The ultimate goal of web crawling is to gather information about these pages and make them accessible in search engine results.
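The link-following process described above can be sketched in a few lines of Python. This is only an illustration, not how any particular search engine works: the `crawl` function, the `LinkExtractor` class and the in-memory `SITE` dictionary are all hypothetical, and a real crawler would fetch pages over HTTP, respect robots.txt and throttle its requests.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin


class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def crawl(start_url, fetch):
    """Breadth-first crawl. `fetch(url)` returns HTML, or None if unavailable."""
    seen = {start_url}
    queue = deque([start_url])
    order = []
    while queue:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            continue
        order.append(url)
        parser = LinkExtractor()
        parser.feed(html)
        for href in parser.links:
            # Resolve relative links and drop #fragments before queueing.
            absolute, _fragment = urldefrag(urljoin(url, href))
            if absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return order


# Hypothetical site held in memory instead of being fetched over HTTP.
SITE = {
    "https://example.com/": '<a href="/about">About</a> <a href="/blog">Blog</a>',
    "https://example.com/about": '<a href="/">Home</a>',
    "https://example.com/blog": '<a href="/about#team">Team</a>',
    "https://example.com/orphan": "No other page links here.",
}

crawled = crawl("https://example.com/", SITE.get)
print(crawled)
```

In this sketch the `/orphan` page is never reached because no other page links to it, which is the situation internal linking and sitemaps are meant to prevent.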

What Are the Web Crawlers Used by the Most Popular Search Engines?

The main web crawlers used by popular search engines include:

- Google: Googlebot

- Bing: Bingbot

- DuckDuckGo: DuckDuckBot

- Yahoo! Search: Slurp

- Yandex: YandexBot

These web crawlers are responsible for indexing web content from across the Internet and providing relevant search results to users.

It’s important to note that there are also many other web crawler bots in addition to those associated with search engines.

How Do Link Categories Influence Search Engine Crawling, Indexing, and Rankings?

When search engine bots crawl web pages, they follow and analyse the links they encounter.

However, the vastness of the internet means that there are billions of web pages to crawl and index, making it a challenging task to keep track of changes and updates.

By categorising discovered links, search engine bots gain valuable insights into the relevance, importance, and freshness of web pages.

This categorisation process involves analysing various factors related to the links, such as the context of the linking page, the anchor text used in the link, the authority of the linking domain, and the historical data associated with the linked pages.

Here are some common types of links:

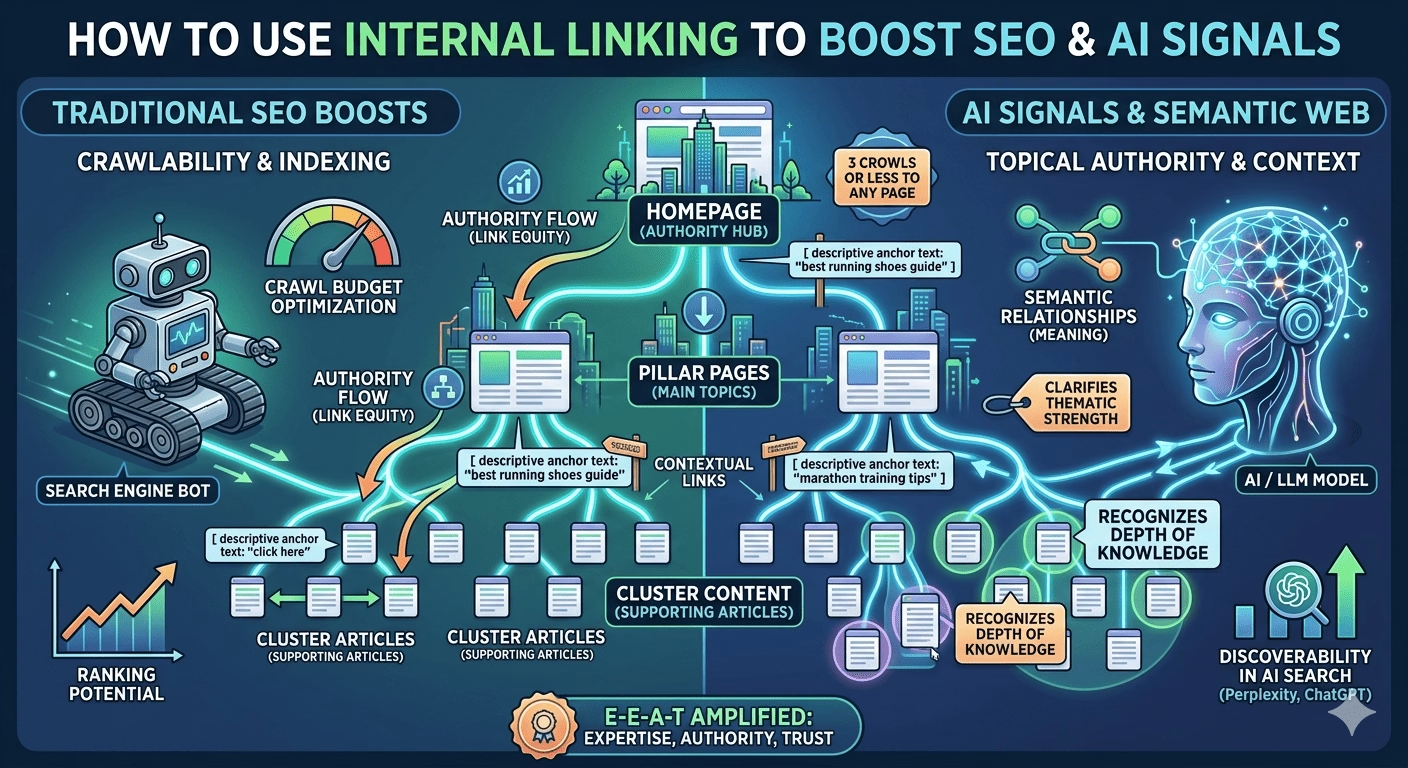

- Internal Links: These are links that point to other pages within the same website or domain. They help search engines understand the structure and hierarchy of a website. Internal links are essential for site navigation and can contribute to the overall SEO (Search Engine Optimisation) of a website.

- External Links: Also known as outbound links, these are links that point to other websites or domains. External links provide additional information or references to the users and can be seen as endorsements or citations. Search engines consider external links as signals of a website’s credibility and authority.

- Backlinks: Backlinks are external links that specifically point to a particular webpage or website. They are created when other websites reference or link to your site. Backlinks are crucial for SEO because search engines view them as votes of confidence. High-quality backlinks from reputable and relevant websites can positively impact a site’s search engine rankings.

- Nofollow Links: Nofollow is a value of the HTML rel attribute that can be added to a link. It asks search engines not to pass any authority or “link juice” to the linked page; Google treats it as a hint rather than a strict directive. Nofollow links are commonly used for sponsored content, user-generated content, or to prevent the flow of authority to untrusted or irrelevant websites.

- Dofollow Links: Dofollow links are the opposite of nofollow links. When a link doesn’t have the nofollow attribute, it is considered a dofollow link. Dofollow links allow search engines to follow and pass authority to the linked page. Most standard links are dofollow by default, meaning they contribute to a page’s SEO and ranking.
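To make these categories concrete, here is a minimal Python sketch that labels each link on a page as internal or external and as nofollow or dofollow. The `classify_links` helper and the sample HTML are hypothetical. Note that “dofollow” is not an actual attribute value; the sketch simply uses it as a label for links whose rel attribute does not contain nofollow.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkClassifier(HTMLParser):
    """Records (target, scope, follow) for every link on one page."""

    def __init__(self, page_url):
        super().__init__()
        self.page_url = page_url
        self.site = urlparse(page_url).netloc
        self.found = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = dict(attrs)
        href = attributes.get("href")
        if not href:
            return
        target = urljoin(self.page_url, href)
        # Same host as the current page counts as internal.
        scope = "internal" if urlparse(target).netloc == self.site else "external"
        rel_tokens = (attributes.get("rel") or "").lower().split()
        follow = "nofollow" if "nofollow" in rel_tokens else "dofollow"
        self.found.append((target, scope, follow))


def classify_links(page_url, html):
    parser = LinkClassifier(page_url)
    parser.feed(html)
    return parser.found


# Hypothetical page with one internal link and one sponsored external link.
sample_html = (
    '<a href="/pricing">Pricing</a>'
    '<a href="https://partner.example.org/" rel="sponsored nofollow">Partner</a>'
)
links = classify_links("https://example.com/", sample_html)
for link in links:
    print(link)
```

Backlinks cannot be detected this way, because they live on other people’s websites; finding them requires crawling those sites or using a backlink tool.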

One common way to categorise links is by evaluating their quality and authority.

High-quality links, such as those from reputable and authoritative websites, are given more weight and considered significant signals of relevance and trustworthiness. These links often indicate that the linked page contains valuable content that should be revisited and potentially ranked higher in search engine results.

Another aspect of link categorisation involves assessing the freshness and recency of the linked pages.

Search engines aim to provide the most up-to-date information to their users, so they prioritise crawling and indexing pages that are frequently updated. By categorising links based on freshness, search engine bots can focus on revisiting and reevaluating pages that are more likely to have new or updated content, ensuring that the search engine index reflects the latest information available.

Additionally, categorising links can help search engine bots identify and prioritise specific types of content.

For example, if a search engine wants to prioritise news articles or blog posts in its index, it may categorise links from news sources or blog directories differently, giving them higher crawling and indexing priority. This enables search engines to better serve users who are looking for the most relevant and timely content in specific categories.

Overall, the process of categorising discovered links empowers search engine bots to make informed decisions about which pages to revisit and update in their index.

By considering factors like link quality, freshness, and content type, search engines can deliver more accurate and up-to-date search results to users, enhancing the overall search experience.

Why Website Crawling Optimisation Matters

Website crawling optimisation plays a crucial role in maximising organic visibility and improving overall SEO performance.

Let’s delve into the reasons why optimising website crawling is of utmost importance.

Ensuring Website Crawlability for Improved Organic Visibility

Website crawlability refers to the ability of search engine bots to discover and navigate through your website’s pages effectively.

When your website is crawlable, search engine bots can index your content and make it visible in search engine results. Here’s why it matters:

- Indexing and Ranking: If search engine bots cannot crawl your website, your pages will not be indexed and will not appear in search results. By optimising website crawling, you increase the chances of your content being indexed and ranked for relevant search queries.

- Expanded Organic Reach: When search engine bots crawl and index your website’s pages, it opens the door for your content to be discovered by a wider audience. Improved crawlability increases the organic reach of your website, attracting more potential visitors and customers.

What is the primary factor influencing Google’s indexing and crawling decisions? According to a recent episode of the ‘Search Off The Records’ podcast, the answer is quality content. Give it a listen!

Transcript of The Effect of Quality in Search

Impact of Speedy Crawling on Time-Limited Content and SEO Updates

In the digital space, time is of the essence. Speedy crawling is particularly critical for time-limited content and timely SEO updates.

Consider the following scenarios:

- Time-Sensitive Content: If your website features time-sensitive content such as news articles, event announcements, or limited-time offers, it is imperative to ensure that search engine bots crawl and index these pages quickly. Speedy crawling ensures that your time-sensitive content reaches your target audience promptly, maximising its relevance and engagement.

- On-Page SEO Changes: When you make significant on-page SEO changes, such as optimising meta tags, improving content structure, or enhancing keyword targeting, effective crawling becomes paramount. The faster search engine bots crawl and index these changes, the quicker you can reap the benefits of your SEO optimisations or rectify any unintended mistakes.

Enhancing Overall SEO Performance through Effective Crawling

Website crawling serves as the foundation of SEO. By optimising the crawling process, you pave the way for improved SEO performance and organic visibility.

Consider the following benefits:

- Better Indexing: When search engine bots crawl your website efficiently, they can index a larger portion of your content. This comprehensive indexing enables search engines to understand the breadth and depth of your website, leading to more accurate search results and better rankings.

- Faster Discoverability: Effective crawling ensures that new content and updates on your website are promptly discovered by search engine bots. This fast discoverability increases the speed at which your fresh content is indexed and displayed in search results, providing timely information to users.

- Optimised User Experience: A well-optimised crawling process improves user experience by enabling visitors to find relevant and up-to-date information on your website. When search engine bots effectively crawl your website, users are more likely to encounter accurate and valuable content, enhancing their overall satisfaction and engagement.

Optimising website crawling is a fundamental aspect of an SEO strategy.

By prioritising crawlability, you can enhance your website’s organic visibility, ensure timely indexing of time-sensitive content, and ultimately boost your overall SEO performance.

Strategies to Optimise Website Crawling

To improve website crawling and enhance search engine visibility, implementing effective strategies is essential.

By doing so, you enable search engine bots to access and understand your content more efficiently, leading to improved indexing, higher rankings, and increased organic traffic.

What are some key strategies that can optimise website crawling and indexing?

1. Optimise Your Server and Minimise Errors

A fast and healthy server response is crucial for efficient crawling. When search engine bots access your website, a slow server response time can hinder the crawling process.

Consider the following tips to optimise server response:

- Optimise Server Configuration: Ensure that your server is properly configured to handle the crawling demands. Work with your hosting provider to optimise server settings and eliminate any bottlenecks.

- Minimise Server Errors: Regularly monitor server logs to identify and address any server errors. Minimising 5xx errors and maintaining a healthy server status improves crawling efficiency.
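As a rough illustration of the log monitoring mentioned above, the following Python sketch counts response status classes for requests whose user agent mentions Googlebot. It assumes your server writes the common “combined” access log format; the regular expression, the `googlebot_status_counts` helper and the sample lines are hypothetical. User agents can be spoofed, so a production check would also verify the requesting IP address.

```python
import re
from collections import Counter

# Matches the request, status, size, referrer and user agent of a combined log line.
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_status_counts(lines):
    """Count responses per status class (2xx, 4xx, 5xx...) served to Googlebot."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if match and "Googlebot" in match.group("agent"):
            counts[match.group("status")[0] + "xx"] += 1
    return counts


GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

# Hypothetical log lines for illustration only.
SAMPLE_LOG = [
    f'66.249.66.1 - - [10/Jan/2025:10:00:00 +0000] "GET / HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Jan/2025:10:00:01 +0000] "GET /shop HTTP/1.1" 503 0 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Jan/2025:10:00:02 +0000] "GET /blog HTTP/1.1" 500 0 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.9 - - [10/Jan/2025:10:00:03 +0000] "GET /contact HTTP/1.1" 500 0 '
    '"https://example.com/" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

counts = googlebot_status_counts(SAMPLE_LOG)
print(dict(counts))
```

A rising share of 5xx responses in this count is a signal to investigate server capacity or application errors before crawling is affected.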

2. Improve Website Structure and Navigation

A well-structured website with intuitive navigation facilitates efficient crawling. Search engine bots rely on internal links to discover and navigate through your website.

Consider the following recommendations:

- Logical Website Hierarchy: Organise your website content in a logical hierarchy, with broader categories and subcategories. This helps search engine bots understand the structure and hierarchy of your website.

- Internal Linking: Implement a comprehensive internal linking strategy. Ensure that all important pages are linked properly, allowing search engine bots to easily access and crawl them. Use descriptive anchor text for internal links to provide context and relevance.

- Combine duplicate content: Remove duplicates to prioritise crawling unique content over unique URLs.

- Flag deleted pages: Use a 404 or 410 status code for pages that have been permanently removed.

- Remove soft 404 errors: Soft 404 pages will still be crawled, wasting your crawl budget. Check the Page indexing report (formerly Index Coverage) in Google Search Console for soft 404 errors.

- Watch out for long redirect chains: They have a negative effect on crawling.
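Redirect chains are easy to spot once your redirect rules are in one place. The Python sketch below walks a mapping of redirects and reports how many hops a URL takes to reach its final destination. The `redirect_chain` helper and the `REDIRECTS` mapping are hypothetical; in practice you would build the mapping from your server configuration or from a crawl.

```python
def redirect_chain(url, redirects, max_hops=10):
    """Return every URL visited, starting with `url`, following `redirects`."""
    chain = [url]
    while chain[-1] in redirects and len(chain) - 1 < max_hops:
        target = redirects[chain[-1]]
        chain.append(target)
        if chain.count(target) > 1:  # redirect loop detected, stop here
            break
    return chain


# Hypothetical redirect rules: source URL -> destination URL.
REDIRECTS = {
    "http://example.com/old": "https://example.com/old",
    "https://example.com/old": "https://example.com/new",
    "https://example.com/new": "https://example.com/new/",
}

chain = redirect_chain("http://example.com/old", REDIRECTS)
hops = len(chain) - 1
print(hops, "hops:", " -> ".join(chain))
```

Here the old URL takes three hops to resolve. Pointing it straight at `https://example.com/new/` would reduce that to a single redirect.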

3. Utilise XML Sitemaps

XML sitemaps serve as a roadmap for search engine bots, guiding them to important pages on your website. Optimising XML sitemaps can enhance crawling efficiency.

Consider the following best practices:

- Include All Relevant URLs: Ensure that your XML sitemap includes all relevant URLs that you want search engines to crawl and index. Regularly update the sitemap to reflect changes and additions to your website.

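- Prioritise URLs: The sitemap protocol lets you add an optional priority tag to indicate the relative importance of different pages, but treat it as a hint at best: Google states that it ignores priority and changefreq values. An accurate lastmod date is the more useful signal for telling search engine bots which pages have changed.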
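For sites without a CMS plugin that does this automatically, a sitemap can be generated with a short script. The sketch below uses Python’s standard library to build a minimal sitemap with loc and lastmod entries. The `build_sitemap` helper, the URLs and the dates are hypothetical placeholders.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """`pages` is an iterable of (url, last_modified_iso_date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, last_modified in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        ET.SubElement(entry, "lastmod").text = last_modified
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages and modification dates.
PAGES = [
    ("https://example.com/", "2025-01-15"),
    ("https://example.com/services", "2025-01-10"),
]

sitemap_xml = build_sitemap(PAGES)
print(sitemap_xml)
```

The resulting file is typically saved as `sitemap.xml` at the site root and submitted through Google Search Console or referenced from robots.txt.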

4. Use Robots.txt and Meta Robots Tags

The robots.txt file and meta robots tags provide instructions to search engine bots regarding which pages to crawl and which ones to exclude. Utilising them effectively can improve crawlability.

Consider the following guidelines:

- Robots.txt File: Use the robots.txt file to control access to specific areas of your website. Specify directories or pages that should not be crawled to focus search engine bots’ attention on relevant content.

- Meta Robots Tags: Use meta robots tags on individual pages to provide specific instructions for crawling and indexing. Options include “noindex” to exclude a page from indexing or “nofollow” to prevent search engine bots from following links on a page.
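Before deploying a robots.txt file, it is worth checking that it blocks only what you intend. The sketch below uses Python’s built-in `urllib.robotparser` to test a few URLs against a sample file. The rules and URLs are hypothetical, and the standard-library parser applies the first matching rule, which is not identical to how every search engine resolves conflicting rules, so treat the result as a sanity check rather than a guarantee.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /cart/
Allow: /

Sitemap: https://example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

blog_allowed = parser.can_fetch("Googlebot", "https://example.com/blog/post")
admin_allowed = parser.can_fetch("Googlebot", "https://example.com/admin/users")
sitemaps = parser.site_maps()

print("blog crawlable:", blog_allowed)    # True
print("admin crawlable:", admin_allowed)  # False
print("sitemaps:", sitemaps)
```

Keep in mind that robots.txt controls crawling, not indexing. A noindex meta robots tag only works if the page is crawlable, because the bot has to fetch the page to see the tag.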

5. Optimise URL Parameters

URL parameters can impact crawling and indexing, particularly when they generate multiple versions of the same content. Optimising URL parameters can improve crawling efficiency. Consider the following techniques:

- Canonicalisation: Implement canonical tags to consolidate different URL versions of the same content. This helps search engine bots understand the preferred version to crawl and index.

- Parameter Handling: Use URL parameter handling techniques, such as using the “rel=canonical” tag, to control crawling and avoid duplicate content issues.
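As an illustration of canonicalisation, the Python sketch below strips tracking parameters from a URL, sorts the remaining parameters, and produces the matching canonical link tag. The `TRACKING_PARAMS` list and both helper functions are assumptions made for this example; which parameters are safe to drop depends entirely on your own site.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed list of parameters that never change the page content.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "sessionid"}


def canonical_url(url):
    """Drop tracking parameters and sort the rest so variants collapse to one URL."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in TRACKING_PARAMS
    )
    return urlunsplit(
        (parts.scheme, parts.netloc.lower(), parts.path, urlencode(kept), "")
    )


def canonical_link_tag(url):
    """Build the <link> element to place in the page's <head>."""
    return f'<link rel="canonical" href="{escape(canonical_url(url), quote=True)}">'


variant_a = "https://Example.com/shoes?utm_source=news&size=9&colour=red"
variant_b = "https://example.com/shoes?colour=red&size=9&sessionid=abc"

print(canonical_url(variant_a))
print(canonical_url(variant_b))
print(canonical_link_tag(variant_a))
```

Both variants resolve to the same canonical URL, so search engine bots are pointed at a single version of the page rather than one per parameter combination.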

By implementing these strategies, you can optimise website crawling and improve search engine visibility. Ensuring a fast server response, improving website structure and navigation, utilising XML sitemaps, leveraging robots.txt and meta robots tags, and optimising URL parameters contribute to enhanced crawling efficiency and indexing of your website’s content.

Popular Questions about Website Crawling

When it comes to website crawling, there are several common questions that users often search for on Google. Let’s address some of the most popular ones:

How Often Do Search Engine Bots Crawl Websites?

The frequency of website crawling varies depending on several factors, including the authority and activity of the website. Popular and frequently updated websites tend to be crawled more often compared to smaller or less active sites. However, search engine algorithms determine the crawling frequency based on factors such as the website’s crawl budget and the freshness of the content.

What Are Crawl Demand, Crawl Rate, and Crawl Budget?

Crawl demand is how much search engines want to explore and index your web pages. It depends on factors like the relevance, freshness, and popularity of your content. When search engines find your site interesting, they allocate more resources to crawl and index your pages.

Crawl rate is how quickly search engine bots visit your website. It determines how often they send requests to your server to analyse your pages. The crawl rate can vary based on factors like your site’s size, importance, and server performance. Search engines adjust the crawl rate depending on server response times and other factors.

Crawl budget is the number of pages search engines are willing to crawl on your site within a specific time. It sets a limit on the number of pages they’ll explore. Search engines allocate crawl budgets based on factors like your site’s authority, content quality, and server performance. The crawl budget affects how efficiently search engines find and index your pages.

In simple terms, crawl demand is how much search engines want to crawl your site, crawl rate is how fast they do it, and crawl budget is the limit on how many pages they’ll crawl.

Google Crawler Is Crawling My Site Too Often, How Can I Reduce The Crawl Rate?

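Googlebot normally adjusts its crawl rate to what your server can handle, but occasionally its requests can place a heavy load on your infrastructure or add unwanted costs, for example during an outage. In those cases, you can temporarily reduce the number of requests made by Googlebot. Google’s documentation suggests returning a 500, 503, or 429 HTTP status code to crawl requests for a short period (a few hours to a day or two); serving errors for longer than that risks pages being dropped from the index.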
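One way to reduce crawler requests for a short period is to answer them with a temporary error status while the server is under pressure. The sketch below shows the decision logic only. The `crawler_response` function, the load figure and the threshold are hypothetical, and wiring this into a real web server or CDN is left out. It should only ever be active briefly, since serving errors to crawlers for days can lead to pages being dropped from the index.

```python
def crawler_response(user_agent, server_load, load_limit=0.85, retry_after_seconds=3600):
    """Return (status, headers), shedding Googlebot traffic while load is high.

    `server_load` is an assumed 0.0-1.0 utilisation figure from your own monitoring.
    """
    is_crawler = "Googlebot" in user_agent
    if is_crawler and server_load > load_limit:
        # 503 signals a temporary problem; Retry-After suggests when to come back.
        return 503, {"Retry-After": str(retry_after_seconds)}
    return 200, {}


GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

overloaded = crawler_response(GOOGLEBOT_UA, server_load=0.95)
normal = crawler_response(GOOGLEBOT_UA, server_load=0.40)
visitor = crawler_response("Mozilla/5.0 (Windows NT 10.0)", server_load=0.95)

print(overloaded)  # (503, {'Retry-After': '3600'})
print(normal)      # (200, {})
print(visitor)     # (200, {})
```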

Can I Control How Search Engine Bots Crawl My Website?

While you cannot directly control how search engine bots crawl your website, you can influence their behaviour through various techniques. Utilising techniques like XML sitemaps, robots.txt files, and meta robots tags can provide instructions to search engines about which pages to crawl and index.

How Can I Ask Google To Recrawl My Website?

To request a crawl of individual URLs, use the URL Inspection tool. If you have large numbers of URLs, submit a sitemap.

What Are The Common Crawling Issues That Websites Face?

Websites may encounter crawling issues that affect their visibility in search results. Some common issues include:

- Blocked Pages: Pages that are blocked by robots.txt files or meta robots tags may prevent search engine bots from crawling and indexing them.

- Duplicate Content: Having duplicate content across different URLs can confuse search engines and impact crawling efficiency.

- Server Errors: If a website frequently experiences server errors or slow response times, it can hinder search engine bots’ ability to crawl and index the site effectively.

How Can I Fix The “Crawled – Currently Not Indexed” Issues Found in Google Search Console?

When Google crawls your page but decides not to include it in the search index, you may encounter the “Crawled – currently not indexed” status in Google Search Console. A page in this state cannot rank on Google.

However, there are steps you can take to address this problem and improve your chances of getting indexed:

- Ensure Unique and Valuable Content: To fix the issue, focus on providing unique and valuable content that is not automatically generated. Edit and enhance your content to offer more value to users, making it stand out from similar pages.

- Avoid Thin Content: Thin content refers to pages with insufficient text content to adequately explain the topic and provide value to users. To avoid this, ensure your page has substantial and informative content that satisfies users’ needs.

- Implement Canonical Tags: Canonical tags are useful in specifying the main source of content and indicating to Google where the original content resides. This helps ensure that Google correctly indexes the preferred version of your page.

- Include Internal Links: Boost the visibility of the page that is not being indexed by including internal links from other indexed pages on your website. These internal links signal the value and importance of the page to Google, increasing its chances of being indexed.

- Work on Building Backlinks: Create backlinks from reputable websites that are relevant to your page’s topic. These backlinks act as endorsements, indicating to Google the value and credibility of your page. Increased backlinks can improve your page’s chances of being indexed.

- Utilise Social Links: Leverage social media platforms such as X (formerly Twitter) to promote your page and encourage others to share and mention it. This social activity helps demonstrate to Google that your page is relevant and valuable to users.

While implementing these recommendations can increase the likelihood of your page getting indexed, it’s important to remember that Google’s indexing decisions are ultimately beyond your control. Indexing depends on various factors and algorithms that Google employs.

Conclusion

In conclusion, implementing website crawling optimisation strategies is vital for achieving improved visibility and indexing in search engine results.

By ensuring efficient crawling, website owners and professional SEO companies can enhance their organic performance, stay relevant in competitive search landscapes, and drive valuable organic traffic to their websites.

Remember to regularly assess and optimise your crawling practices to adapt to evolving search engine algorithms and user behaviour. Stay proactive, stay optimised, and reap the benefits of effective website crawling optimisation.

How Agile can help

Agile, with its expertise in digital marketing and professional SEO services, stands ready to guide and support your professional services business in executing tailored SEO campaigns and strategic content marketing.

Our commitment to excellence is underscored by our recognition as a Top Technical SEO Company in the United Kingdom for 2026.

Frequently Asked Questions

What is website crawling and why does it matter for SEO?

Website crawling is the process by which search engine bots (such as Googlebot) discover and scan your web pages by following links. It matters for SEO because a page that isn’t crawled cannot be indexed, and a page that isn’t indexed cannot appear in search results. Ensuring your site is easily crawlable is a foundational technical SEO requirement.

How can I check if Google is crawling my website?

Use Google Search Console’s Page indexing report (formerly Coverage) and Crawl Stats report to see how frequently Googlebot visits your site, which pages it crawls, and any errors it encounters. You can also check your server logs for Googlebot requests or use the URL Inspection tool to see the last crawl date for specific pages.

What is a crawl budget and should I worry about it?

Crawl budget is the number of pages a search engine bot will crawl on your site within a given timeframe. For most small-to-medium sites (under 10,000 pages), crawl budget isn’t a concern. It becomes important for large sites where you need to ensure search engines prioritise your most valuable pages over low-value ones.

How do I fix crawl errors on my website?

Start by reviewing the errors in Google Search Console. Common fixes include repairing broken internal links, updating or removing outdated redirects, fixing server errors (5xx), ensuring your robots.txt isn’t blocking important pages, and resolving duplicate content issues with canonical tags. Prioritise errors on your most important pages first.

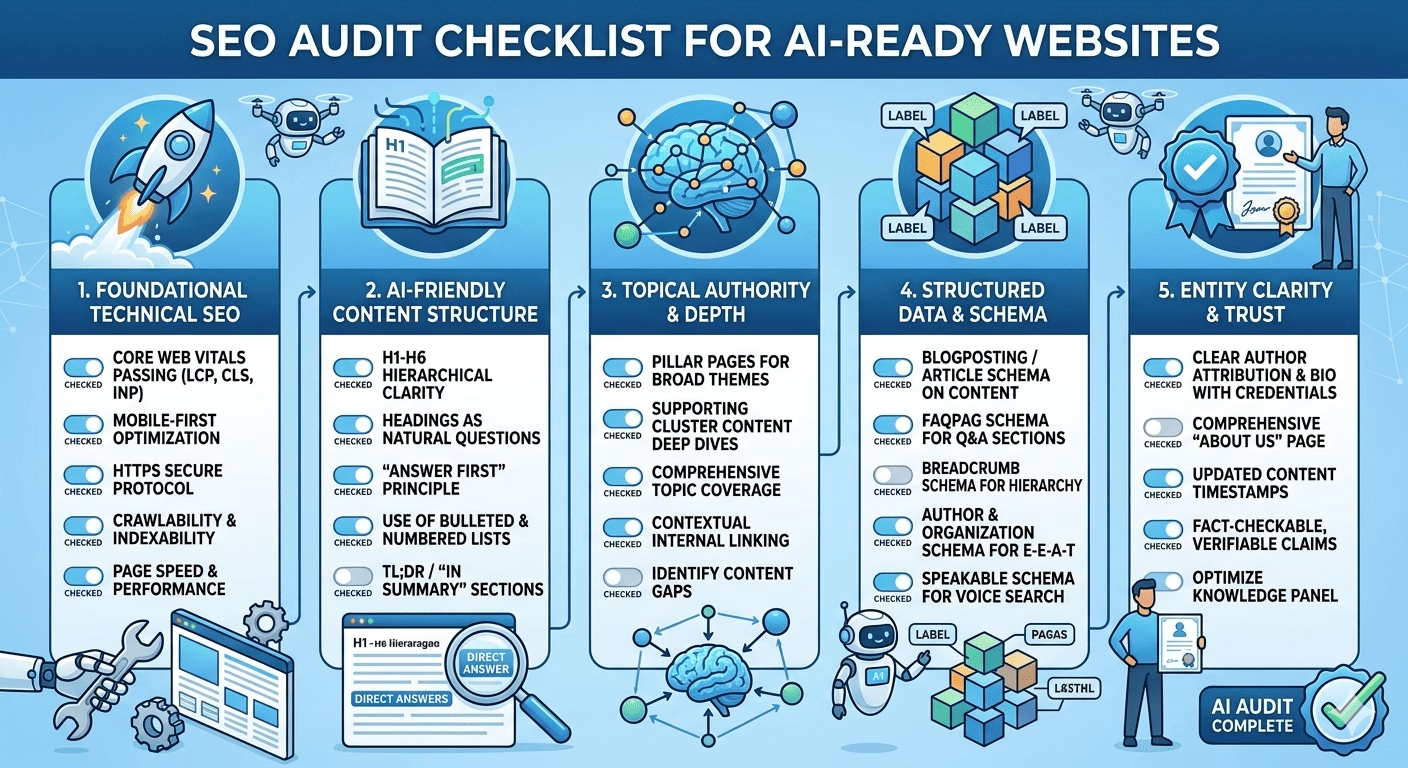

Does website crawling affect AI search engine visibility?

Yes. AI search engines and large language models rely on web crawling to build their knowledge bases. If your pages aren’t crawlable and well-structured, they won’t be included in the training data or retrieval systems that power AI search responses. Clean site architecture, proper schema markup, and fast load times all improve both traditional and AI crawlability.
